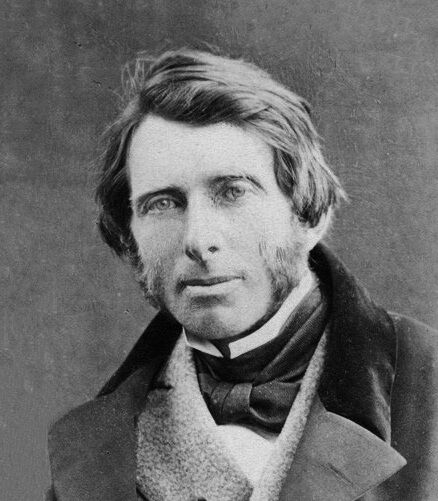

In 1907, Dr. George McAnelly Miller and his brother-in-law Albert Peter Dickman relocated their families to the shore of Tampa Bay, Florida. They purchased land and began establishing homes, a sawmill, and a school. The Ruskin Commongood Society platted Ruskin on February 19, 1910. Lots were set aside for a college, a business district, two parks, and the founding families, with only Whites allowed to own or lease land in the community.

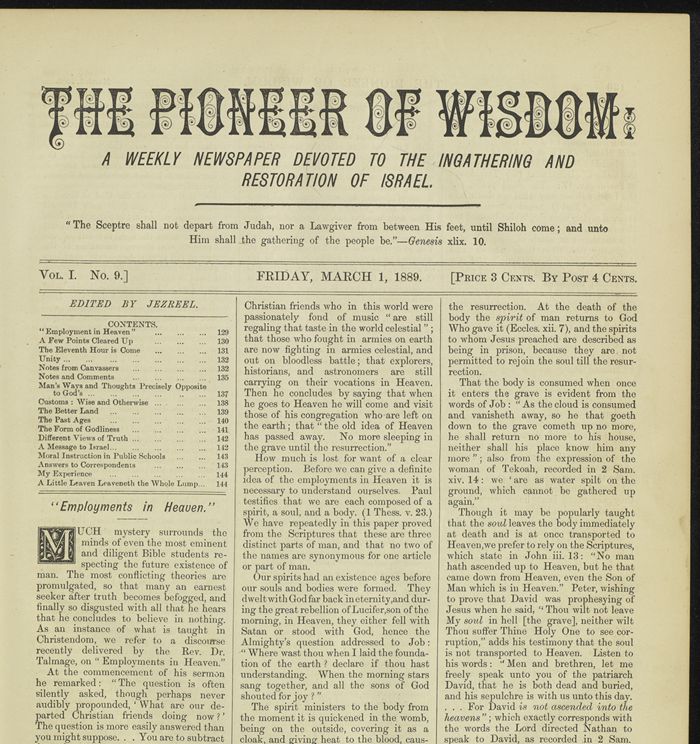

The Millers opened Ruskin College in 1910, with Dr. Miller and his wife Adeline serving as president and vice-president, respectively. By 1913, the community had a cooperative general store, a canning factory, a telephone system, an electric plant supplying electricity to both public and private buildings, a weekly newspaper, and regular boat freight and passenger service to Tampa. The onset of World War I hastened the demise of the college, which was destroyed by fire in 1918. Dr. Miller died in August 1919.